Module 2 - Session 4: Training and Evaluating Your Classifier

Session 4

Setting Up Training

Everything you need:

- Model (on device)

- Loss function

- Optimizer

- Data loaders

Device and Model Setup

Both model and data must be on the same device

Loss Function

Designed for classification tasks

Perfect for choosing a digit from 0-9

Optimizer

Adam: adapts learning rate as it trains

- Larger adjustments early (noisy gradients)

- Smaller corrections later (training stabilizes)

Training Function

def train_one_epoch(model, dataloader, loss_fn, optimizer, device):

model.train() # Set to training mode

running_loss = 0.0

correct = 0

total = 0

for batch_idx, (data, targets) in enumerate(dataloader):

# Move to device

data, targets = data.to(device), targets.to(device)

# Training steps

optimizer.zero_grad()

outputs = model(data)

loss = loss_fn(outputs, targets)

loss.backward()

optimizer.step()

# Track progress

running_loss += loss.item()

_, predicted = outputs.max(1)

total += targets.size(0)

correct += predicted.eq(targets).sum().item()

if batch_idx % 100 == 0:

print(f'Loss: {loss.item():.4f}, '

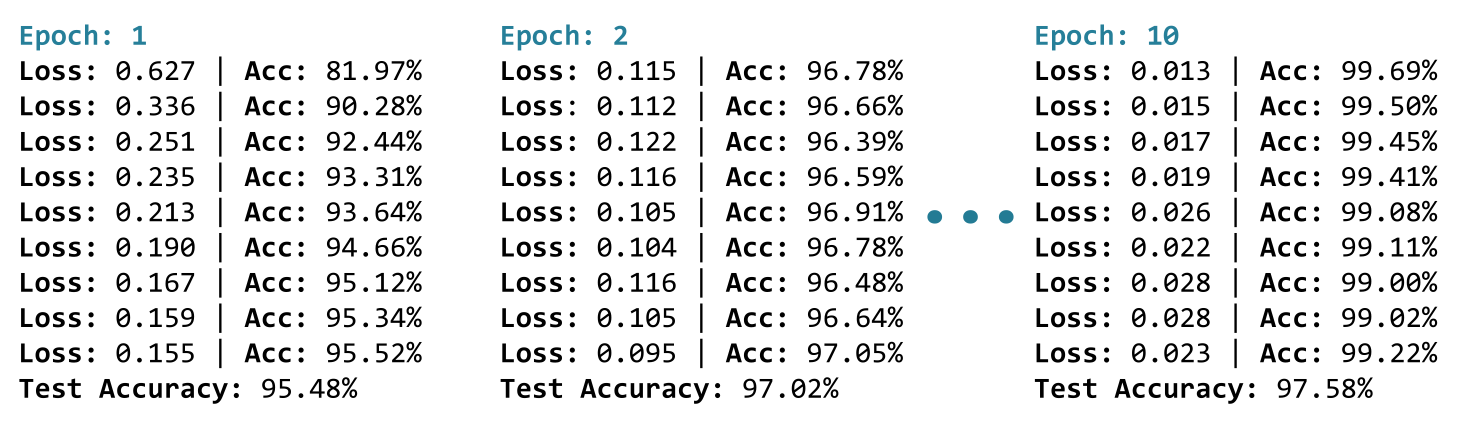

f'Accuracy: {100.*correct/total:.2f}%')Understanding the Training Progress

With 60,000 training images and batch size 64:

- About 938 batches per epoch

- Around 9 progress updates

Watch the numbers:

- Loss: dropping (0.64 → 0.17)

- Accuracy: climbing (81% → 95%)

Evaluation Function

def evaluate(model, dataloader, device):

model.eval() # Set to evaluation mode

correct = 0

total = 0

with torch.no_grad(): # Disable gradient tracking

for data, targets in dataloader:

data, targets = data.to(device), targets.to(device)

outputs = model(data)

_, predicted = outputs.max(1)

total += targets.size(0)

correct += predicted.eq(targets).sum().item()

return 100. * correct / totalPutting It All Together

10 epochs = 10 full passes through training data

After each epoch: evaluate on test set

What You’ll See

By epoch 10: Loss: tiny, Accuracy: high (often 95%+)

When accuracy stops improving: Model is done learning, May not need all 10 epochs

Module 2 Summary

You’ve learned:

- PyTorch data pipeline (Dataset, DataLoader, Transforms)

- Building custom models with

nn.Module - Loss functions (MSE vs Cross-Entropy)

- How optimizers use gradients to update weights

- Device management (CPU vs GPU)

- Complete image classification pipeline

Lab 1: Building Your First Image Classifier

“Don’t tell me the moon is shining; show me the glint of light on broken glass.”

CUE: START THE LAB HERE

Assignment 2: EMNIST Letter Detective

“The eye sees only what the mind is prepared to comprehend.”

CUE: START THE ASSIGNMENT HERE

What’s Next?

In Module 3: Data Management we learn:

- How to manage and preprocess data for deep learning

- How to build a robust data pipeline

- How to use data augmentation to improve model performance

- How to use data validation to ensure model performance